What Is an AI Course? Complete Guide in Hindi

Artificial Intelligence Career 🚀 What Is an AI Course? A Complete Guide in Simple Hindi. In today's fast-moving world, Artificial Intelligence […]

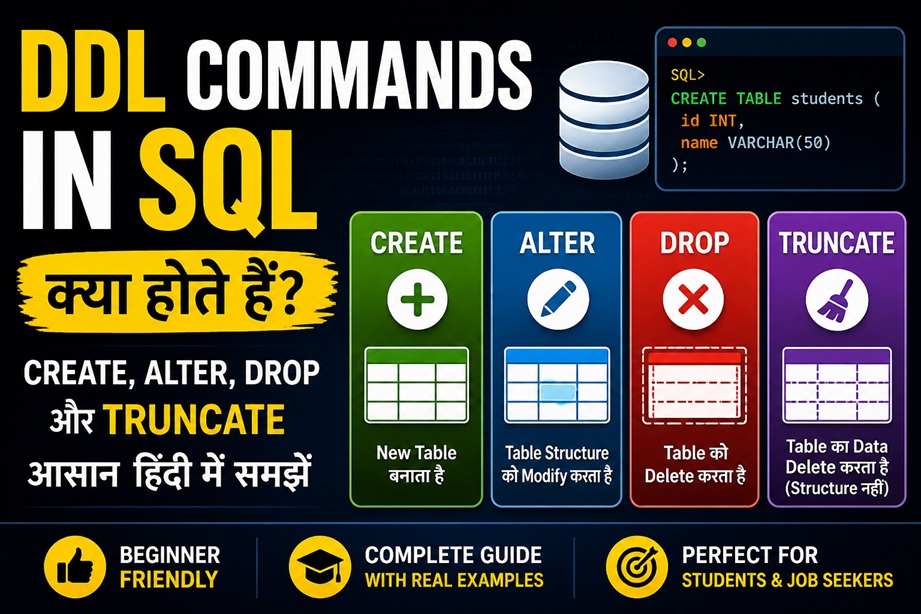

DDL Commands in SQL in Hindi – CREATE, ALTER, DROP, TRUNCATE

SQL Beginner Guide: What Are SQL and DDL? For every beginner, understanding DDL Commands in SQL in Hindi […]